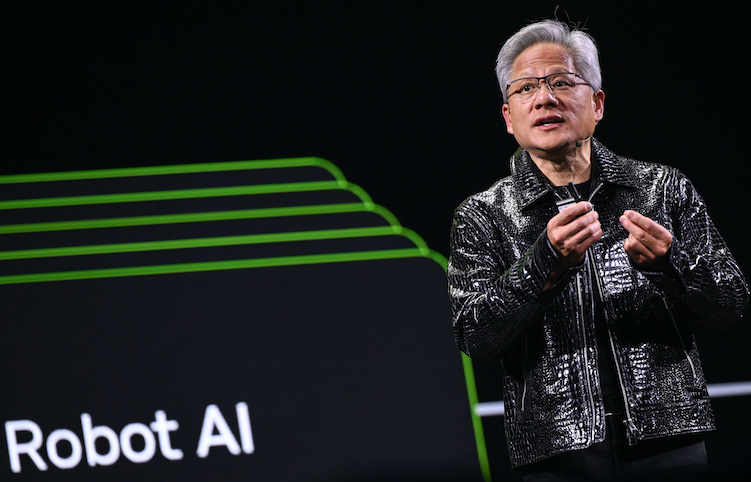

Nvidia CEO Jensen Huang is already talking up his next-generation computer chips, saying on Monday that they deliver five times the artificial intelligence computing power of the company’s previous chips when powering chatbots and other AI applications.

In a speech at the Consumer Electronics Show in Las Vegas, Huang, a former salesman, said the company’s next-generation chips are in “full production.”

The boss of the world’s most valuable company revealed new details about the chips, which will launch later this year. Nvidia executives told Reuters that the new chips are already in the company’s labs being tested by artificial intelligence companies, as Nvidia faces increasing competition from rivals as well as its own customers.

Vera Rubin “pods”

The Vera Rubin platform, which consists of six separate Nvidia chips, is expected to debut later this year, with the flagship server containing 72 of the company’s graphics processing units and 36 of its new central processing units.

Huang showed how they can be strung together into “pods” of more than 1,000 Rubin chips and said they can improve the efficiency of generating so-called “tokens” (the basic units of artificial intelligence systems) by 10 times.

However, Huang said that to achieve the new performance results, the Rubin chips use a proprietary kind of data that the company hopes the broader industry will adopt.

“That’s how we were able to achieve such a huge improvement in performance even though we only had 1.6 times the number of transistors,” Huang said.

The competition for chatbot responses

While Nvidia still dominates the market for training artificial intelligence models, it faces more competition, both from traditional rivals such as Advanced Micro Devices and from customers such as Alphabet’s Google, in delivering the results of those models to hundreds of millions of users of chatbots and other technologies.

Much of Huang’s presentation focused on how the new chips can do this task better, including by adding a new layer of storage technology called “context memory storage,” designed to help chatbots respond faster to long questions and conversations.

Nvidia also unveiled a new generation of network switches that use a new connectivity method called “co-packaged optics.” The technology, which is key to linking thousands of machines into one, competes with products from Broadcom and Cisco Systems.

Nvidia said CoreWeave will be among the first companies to have the new Vera Rubin systems, with Microsoft, Oracle, Amazon and Alphabet also expected to adopt them.

In other announcements, Huang highlighted new software that can help self-driving cars decide which path to take and leave a paper trail for engineers to use later.

Alpamayo software

Nvidia showed off research late last year on software called Alpamayo, which Huang said on Monday would be released more widely, along with the data used to train it, so that automakers can evaluate it.

“We open source not only the models, but also the data used to train those models, because only then can you truly trust how the models are formed,” Huang said on a stage in Las Vegas.

Last month, Nvidia picked up talent and chip technology from the startup Groq, including executives who helped Alphabet’s Google design its own artificial intelligence chips.

While Google is a major Nvidia customer, its own chips have become one of Nvidia’s biggest threats as Google works closely with Meta Platforms and other companies to chip away at Nvidia’s AI stronghold.

In a question-and-answer session with financial analysts after the speech, Huang said the Groq deal “will not impact our core business” but could lead to new products that expand its lineup.

Nvidia, meanwhile, is eager to show that its latest products outperform older chips like the H200, which U.S. President Donald Trump has allowed to flow to China.

Reuters has reported that the H200 chip, the predecessor of Nvidia’s current “Blackwell” chip, is in high demand in China, which has raised alarm among China hawks in U.S. political circles.

Huang told financial analysts after his keynote that demand for H200 chips was strong in China, and Chief Financial Officer Colette Kress said Nvidia had applied for licenses to ship the chips to China but was awaiting approval from the U.S. and other governments before it could do so.

- Reuters, with additional editing by Jim Pollard