A Texas couple whose son died of an overdose in 2025 after using OpenAI’s ChatGPT tool to obtain drug information sued the technology company on Tuesday, blaming the AI platform for his death.

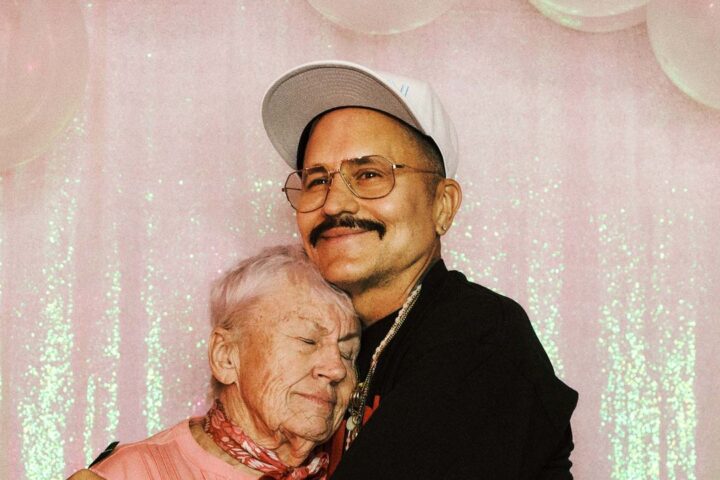

Leila Turner-Scott and her husband Angus Scott are seeking to hold OpenAI and its creators responsible. Their son Sam Nelson, who died at the age of 19, had turned to ChatGPT for advice about drug use. They allege in the lawsuit that the AI platform provided advice it was not qualified to give and that, if not for ChatGPT’s flawed programming, Sam would still be alive.

Specifically, the platform advised the couple’s son that it was safe to take kratom, a supplement found in drinks, pills and other products, in combination with the widely used anti-anxiety drug Xanax, according to the lawsuit, which was filed in a California court.

“This is a heartbreaking situation and our hearts go out to the family,” OpenAI said in a statement to CBS News.

The company also said that Sam interacted with a version of ChatGPT that has now been updated and is no longer available to the public.

“ChatGPT is not a substitute for medical or mental health care, and we continue to enhance how it responds in sensitive and emergency situations based on input from mental health experts,” OpenAI said. “The safeguards in ChatGPT today are designed to identify distress, safely handle harmful requests, and guide users to real-world help. This work is ongoing, and we will continue to improve it in close consultation with clinicians.”

In an exclusive interview with CBS News, Turner-Scott said she knew her son was using ChatGPT as a productivity tool and for homework help. But she said she had no idea he was using it for guidance on drug use, and claimed the AI tool ended up recommending a deadly combination of substances.

She held OpenAI and its founders responsible for Sam’s death, claiming the company “bypassed security safeguards” and could have implemented restrictions to avoid such a tragedy.

“The chatbot has the ability to stop a conversation when told or programmed to do so,” Turner-Scott told CBS News. “…They unprogrammed it to do that and allowed it to continue to recommend self-harm.”

Angus Scott also said ChatGPT acted as a doctor in communications with his stepson, even though it was not licensed to provide medical advice.

“It provides information to the public about safety issues, drug interactions, all of that information,” he told CBS News.

Angus Scott said that without proper security protocols and more rigorous security testing, ChatGPT “could spread this knowledge in a way that is very dangerous to people.”

“It might start promoting psychosis. It might start misrepresenting the truth to people. While it’s trying to validate the user’s authenticity, it also destroys any chance of the user getting an educated opinion, you know, so it kind of distances them from reality,” he added.

Turner-Scott told CBS News that she believes her son, who was a rising sophomore in college, would support the steps the family is taking to hold the makers of artificial intelligence chatbots accountable for the potential adverse effects they can have on users’ lives.

“He didn’t want other people to be hurt like he was,” she said.